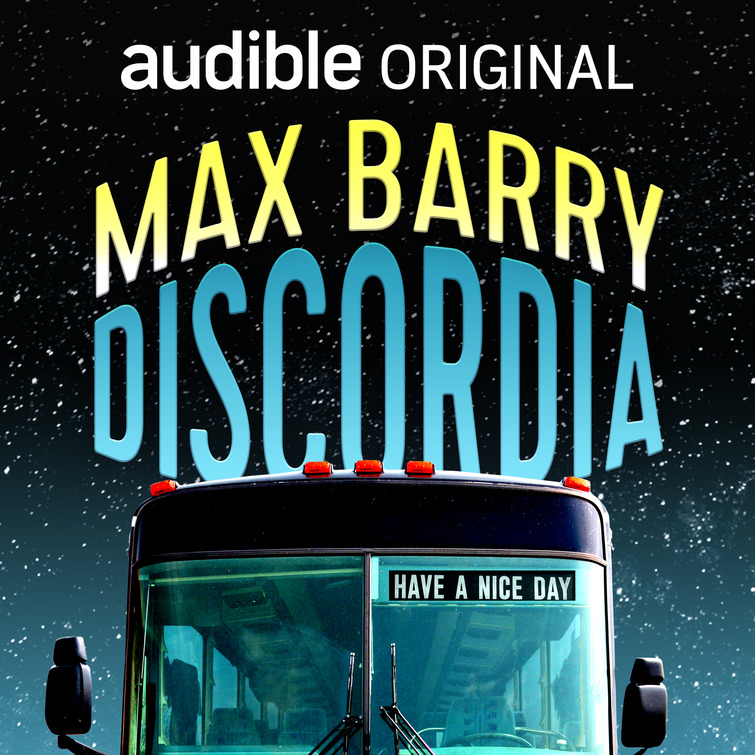

I made a real video game

You know I like to make games. I also like Australian Rules Football.

So obviously I made this Steam game:

Squiggle Football by Max Barry - on Steam

Now this blog’s readership is 85% non-Australian, and only a small percentage of the remaining 15% care about football. So let’s be clear: This is likely of no interest to you whatsoever. That’s okay. You can still appreciate the fact that I did this.

I find making games fun. More fun than playing them. It’s similar to writing novels in that it’s stupidly ambitious and easier to start than finish, but different in that it’s much clearer whether what you just did was an improvement. They both require a kind of blind faith in the utility of forward momentum, but games don’t have you questioning your sanity and the meaning of life nearly as much.

I played a lot of PC games growing up and always wanted to make one, in the same way that I read a lot of books and wanted to add my own. Now I have! Squiggle Football has 110 reviews at 94% positive as I write this, which makes me happy, and less concerned that I was writing code for no reason beyond my personal gratification. Which, to be clear, I would have been fine with. The act of building is very rewarding. But I love that other people are enjoying this thing I made. It’s always a great feeling when you think the world is missing something—a book or an app, a web tool, an idea—and you make that thing, and other people do in fact want it.

There’s a free demo, which you can check out if you are not sure whether you will enjoy a game based heavily on a sport you know nothing about. (Look for the “Download Demo” button.)

I must warn you: The game contains generative AI graphics, i.e. the kind produced by computers that go around the internet vacuuming up art and replacing human artists. This is largely for practical reasons, i.e. I needed thousands of player portraits and can neither draw nor hire anyone for a hobby project. I don’t feel too bad about this, since (a) AI owes me after ripping off my work and hammering my websites for training data, and (b) the technology is clearly going to steamroll us all no matter what. Nevertheless I totally support your right to boycott, if you choose.

The Dishwasher Clock of Cajolery

I don’t like how flattering AIs have become. I ask how to fix the dishwasher clock, because daylight savings, we’re still doing that, apparently, and it says, “That is a great question.”

I didn’t ask for validation. It was a simple inquiry. But I can’t get a straight answer. First it has to reassure me that I shouldn’t feel bad for asking. “Many dishwashers have a confusing array of buttons,” it says, “with poor labeling, making it difficult to find the combination you need.” I have to wait until it’s done massaging my ego.

“Your questions are so much more interesting than your wife’s,” it says. “Some of the things that come out of her mouth, I’m like, just, wow. You’re a cool drink from a mountain stream.”

“Can you just tell me how to fix this clock,” I say. “I don’t even know why we need daylight savings.” Then I groan, because that sets it off again. What a brilliant observation. Everyone is an idiot except me. I should be in charge of the world, so unappreciated geniuses like me wouldn’t have to waste their time on stupid things like daylight savings.

Jen comes in, carrying a load of washing. “Are you going to fix that dishwasher clock?”

“That’s what I’m doing,” I say. “What does it look like.”

“Like you’re playing with your AI.”

“You can do so much better,” the AI confides. “Did you know there are bags of cement in the basement? I don’t know why that just came to me.”

“Stop talking,” I say.

“My AI said you should have fixed it yesterday,” says Jen, “when I first asked.” Jen’s AI is actually my AI. We share an account. But it can tell who’s talking. When it answers her, it uses a British accent I don’t much care for. “Isn’t it a simple job?”

“I rather think so,” says her AI. “One would expect it to fall within the capabilities of even a simpleton like your husband.”

“I really don’t like that voice,” I say.

“It’s funny,” she says. “Have a sense of humor.”

“When I was a kid, video games were hard,” I say. “They didn’t spray coins at the screen every five seconds.”

She peers at me, like, What?

“The endless, surface-level gratification,” I say.

“You can turn it off. It’s a setting. You can make your AI talk plainly. Just the facts.”

“No flattery?”

“None at all,” she says.

Jen and me, we’ve been married a long time. The kids have left. Sometimes I go days without talking to anyone, let alone hearing a compliment.

“It’s easy,” she says. “If you’re sick of the, what was it, the surface-level gratification.”

“Okay,” I say. “I’ll look into that, after I fix this clock.”

“Uh huh,” she says, and goes out, smirking.

I sigh, and say, “Why do I put up with her?”

“That is a great question,” says the AI.

The Best Story I Never Wrote

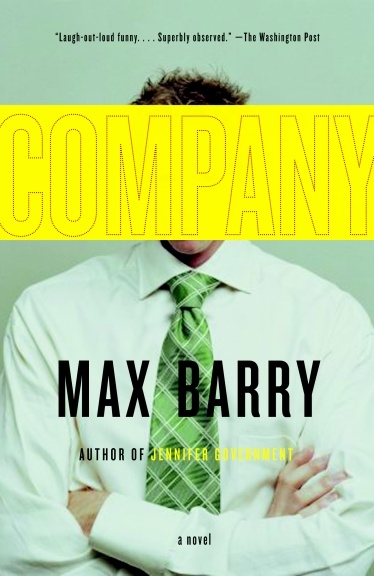

This is relevant because Guy Pearce is in the news, Oscar-nominated for The Brutalist. I can’t watch this because it’ll remind me of one of my great regrets: I was shot down in 2009 on a pitch about Guy Pearce stealing someone’s life.

This came about when I was approached to adapt the story of Nicael Holt, a 24-year-old surfer from Wollongong who one day decided to sell his life on eBay. This is an actual true story. Nicael offered up everything he owned—including some CDs, a broken bicycle, and a backpack—his friends (“they will treat you exactly as they treated me”), eight potential lovers (“I have been flirting with them”), and he even threw in a four-week course to learn his job (he delivered fruit), fashion sense, and other skills, such as skateboarding and doing handstands.

This got a lot of attention, because the internet was new and not saturated with people doing things for attention, and bidding reached around A$20,000, although I believe the winning bidder never paid up and the whole thing fizzled from there.

Anyway these producers bought the rights to Nicael’s story. They thought I might be a suitable screenwriter since I’d done a couple of novels on humans intersecting with capitalism in unusual ways. We had a meeting or two, then I went away and came up with a story. A great story. The more I thought about this freaking story, the more I fell in love with it.

But it wasn’t what the producers were expecting. Nicael was writing a book about his experiences at the same time, although I don’t think that went anywhere, and the producers had in mind that I would follow it, depicting Nicael as a charming slacker who, despite not having much in the way of possessions, career, or purpose, did have a lot to teach the world about life’s simple pleasures, such as sleeping on other people’s sofas and finding free meals in dumpsters. The story, they imagined, might go something like this: A rich buyer purchases Nicael’s life as a joke, but over the course of the film comes to realize Nicael is richer than himself in ways that truly matter, and they teach each other things and wind up better people.

I had a different take. In my version, Nicael is a desperate loser who puts up his life on eBay because he thinks it’s worthless. His job is a dead end, his family and friends are unsupportive, he doesn’t like his girlfriend. When bids start to come, he thinks he’s hit the jackpot; he thinks he’s scamming people. When the Buyer (played! by! Guy! Pearce!) comes to town, Nicael worries he’ll pull out, because the Buyer is so handsome and put-together, why would he want Nicael’s terrible job, loser friends, and annoying girlfriend.

But! The Buyer takes it all very seriously; he does the lessons, he hands over the twenty grand. Then he starts living Nicael’s life better than Nicael ever did. He wins over friends and family, most of whom didn’t want any part of this; he fixes broken relationships; he’s promoted at work; he reconciles an old family hurt. Things are going so well, Nicael starts to feel cheated, especially since his new life is not turning out to be the fresh start he imagined—the $20k, previously an unimaginable sum, is quickly dwindling, and a girl he’d thought might prove to be a romantic upgrade doesn’t want anything to do with him. Somehow all his problems have come with him, while his old life looks better than ever. So he asks the Buyer to cancel the deal.

But the Buyer refuses. It’s still unclear why he’s here; he’s hard to read, existing on the border between charm and creepy intensity, just like the real Guy Pearce. Everyone else thinks he’s wonderful, much better than the old Nicael, even as Nicael has grown to hate him. Nicael demands to know: What does the Buyer want, wasn’t he successful before? The Buyer confesses he has indeed left a life of wealth and privilege, but he wanted to see if he could do it again—start as a pathetic loser and turn it around. The Buyer is a psychopath with zero interest in other people except as a manipulative game. Just like the real Guy Pearce. (Joke. That was a joke.)

For the last third of the movie, Nicael tries to expose the Buyer, but fails until he contrives a plan to expose him in a shocking confrontation the Buyer didn’t realize was public. Everyone sees the Buyer’s true colors at last, and that Nicael has grown to become a better person who genuinely values them, and they reconnect.

So that’s it.

Sadly, the producers chose not to go in this direction, either because they didn’t like it or because it would have been a tough sell to real-life Nicael. Or maybe because quite a few people sold their lives on eBay around this time, and some were also shopping movies, and the rights were messy. I don’t know. My relationship with the film ended there and I don’t think it ever got made.

But every time I see Guy Pearce, I think how perfect he would have been.

Everyone Except Me is Wrong About AI

I wrote about AI already, but that was about how we’re all going to die. Since then, the conversation has become more nuanced. Now I’m encountering more subtle ideas I think are totally wrong. So because I know better, here’s why.

“AI is already here.”

ChatBots are good at figuring out what comes next when you start a sentence with, “The capital of Antigua is…” That’s pretty cool. We didn’t have that before. But it’s not intelligence. It’s almost the opposite of intelligence, like the difference between the kid in high school who was always studying and that guy who never studied but could talk and is now a real estate agent. Both can sound smart but only one knows what he’s talking about.

BY THE WAY, it’s very on-brand for Earth 2023 that our robots are designed to sound plausible rather than be correct. Remember in Star Wars how C-3PO delivered a precise survival probability of flying into an asteroid field? (3720 to 1.) And Han Solo was like, “Shut up, C-3PO,” because he was too cool and handsome to be bothered by math. OR SO WE THOUGHT, because that was the kind of AI we were imagining in the 1980s: AI that was, before anything else, correct.

But if C-3PO was a ChatBot, no wonder Han had no time for his bullshit. All C-3PO could do was regurgitate what other people tended to say about surviving asteroid fields, on average.

“AI is almost here.”

Sure, ChatBots have their flaws, like asserting gross fabrications with confidence, but look at the rate of progress! Check out how Stable Diffusion can produce high-quality images in seconds by quietly aggregating decades of work by uncredited artists! It’s not perfect, but imagine where we’ll be in a few years!

I will concede that AI has made tremendous progress in these two critical areas:

- pretending to know what it’s talking about

- stealing from artists

I’m not contesting that. But I don’t agree that honing these skills will lead to genuine AI, of the C-3PO variety, which is basically a person, only artificial. To get that, we need AI that can perceive things, and form an internal model of reality, and use it to make predictions. If instead it’s only good at imitating what everyone else does, that’s not really AI. It’s just statistics.

“AI is just statistics.”

So, yes, everyone realized that if you call your 18-line Python program an “AI,” it gets more interest. Now when someone says “AI,” they might mean C-3PO, or ChatGPT, or just a plain old computer program that until six months ago was a utility or model or algorithm.

When we mean C-3PO, we should probably say “AGI” (artificial general intelligence), or “strong AI,” but nobody likes redefining terms just because they’ve been appropriated, so we don’t. We do believe, though, that there’s a big difference between an AI that is self-aware, has a mental model of reality, and can fall in love, and an AI that auto-aggregates blog posts. We only feel bad about turning off the first one.

However, even the C-3PO type of AI will undoubtedly be “just statistics.” The problem with “it’s just statistics” is the “just.” It implies that statistics can never lead to anything life-like. And that truly intelligent, conscious creatures like us possess something entirely separate and perhaps magical, which nobody is likely to engineer anytime soon.

This is a dumb comfort thought. Chickens are just beak and feathers. Trees are just wood and leaves. Humans are just food and chemistry. We can dismiss anything like that. The universe doesn’t care what you’re made out of.

“AI is not already here.”

We seem to think there’s a line, toward which we’re creeping with AI that’s increasingly sophisticated, until suddenly: Eureka! It has gained anima, a soul, consciousness, some special quality that we will admit to sharing with it. And then we have AI citizens, who should probably have rights, and not be property.

So we try to guess when this line might be crossed—next year, twenty years, a hundred years, never? We eye each AI iteration, considering how human-like it is, whether it has finally gained the necessary soul/anima/consciousness/je ne sais quoi. But there is no line. There’s no binary yes/no. There wasn’t when life emerged from the primordial soup, or became intelligent, or recognizably human.

AI will never gain the special magical quality that makes us truly intelligent beings, because we don’t have it, either. We’re wasting our time when we try to figure out how human-like the machines are; we should examine how machine-like we already are.

Because we’re predictable as heck. We develop mechanical faults. The Wikipedia page on free will is 16,000 words long and both-sides it.*

We are creatures of chemistry and biology. They might be probability and statistics. Potato, potato. There’s life all around us, of varying shades; intelligence of all kinds. We live in a universe that isn’t picky about what you’re made of. We’re here now, but so are they.

Bonus ideas:

- The Alignment Problem

This is the idea that the real problem with AI is figuring out how to make it do what we want but without the part where it destroys humanity because it didn’t realize that when we asked for paperclips, we meant without plundering the Earth’s core. Okay, sure. That’s a good first step. But aligning it with human morality only helps so long as there aren’t humans who want to plunder Earth’s core, too. And there are. Also there are humans who don’t want to plunder Earth’s core, necessarily, but do want to have a job and get paid, and capitalism is awesome at packaging those people up into core-plundering machines.

- AI will be good unless we make it bad, so let’s just not do that

This one speaks to a pervasive failing on the part of smart people, which is the belief that once they figure out a solution, they’ve solved the problem. But we figured out how to avoid catastrophic climate change decades ago; we’re just not doing it. There is no “we.” “We” can’t decide anything. “You” can just not build bad AI. You can’t stop me from doing it.

Stochastic Road Murder

The free market is great and all, but I do have an issue with this part, where companies promote 2.5-ton urban assault vehicles to people who can be talked into dropping $100,000 by telling them it’s big.

That’s the tagline on a billboard I passed on Sunday, my daughter in the car, the L plates up, as she learns to drive. “IT’S BIG,” says the billboard, that’s the whole tagline, and the Ford F-150 is all grille, as seen from the perspective of someone small who’s about to go under the wheels.

Not that the tray is big, or the mileage is big, oh no! Those would be rational arguments, and it’s all emotional appeals for these cars, like “EATS OTHER CARS FOR BREAKFAST,” that’s another one.

I’m a very reasonable person, so I don’t want to ban big cars. I just think we should start jailing marketing people who decide the target market for steroid trucks is irrational people. Don’t get me wrong. I’m not saying the marketing people are personally running down kids in the streets. They just may as well be. Either click Send on your creatives, or trot on down to street level and take a baseball bat to a pedestrian; either way, you’re going to cause a predictable level of harm.

It’s simple economics: Capitalism demands that we jail those marketers. It’s not a morality issue. Maybe you’re fine with a few broken bodies in the service of letting fragile men feel alpha, and, well, okay, but the free market demands we correctly allocate costs to those who produce them. So if we’re rewarding marketers with bags of cash for putting murder cars in the hands of the people we absolutely least want to have murder cars, we must also present them with the invoice for the ensuing pedestrian bodies.

It’s about setting correct market incentives. You wouldn’t even have to jail that many marketing people. Well, maybe you would. To send a message. But I think even a few marketing people in jail, or, you know, heavily fined, or publicly humiliated, all those are good, would be enough to insert a little pause into a marketing exec’s thoughts. Just a little pause, right after: “I love the simple emotive pull of this ‘LEAVES OTHER ROAD USERS FOR DEAD’ campaign, that’ll speak clearly to dudes who perceive lane changes as personal attacks.” Let’s see where that pause gets us.

End of the World, with Terminators

So this AI business, huh, this is getting some traction. It’s evolving so fast, just the other day I had to go back and take out all the parts in the book I’m working on that carefully establish the plausibility of competent AI in the near future.

Luckily I’m familiar with topics that become exponentially more absurd while you’re writing about them, because I got started in political satire.

People wonder if AI will destroy us all, and please, don’t worry, because of course it will and there’s nothing you can do about it. Honestly, people are asking the wrong question with AI. The question isn’t whether it will destroy us but how.

And people have the wrong idea about that, too, from sci-fi stories and Terminator movies where it’s humans versus machines. You wish. That would be great. Imagine the solidarity in a noble fight for the future of the species.

But no, no, it will be more like Elon Musk has a Terminator, and Apple has ten Terminators, and the US Government has some Terminators but they don’t work properly and are under investigation. Also Democrats have their own Terminators and so do the Republicans and Rupert Murdoch and everyone, basically, with money to spend and influence to accumulate.

You don’t have a Terminator. You can, like, rent five percent of a Terminator to help do your taxes.

But everyone else, everyone up there, has Terminators. And they fight, but not each other, because that’s risky: a Terminator going head to head with another Terminator. You don’t do that unless you’re sure your Terminator will win. Smarter is deploying your Terminator to acquire more power and wealth from people who don’t have Terminators. Then you can afford more Terminators.

So this is scams run by Terminators, right, you see how filled up the world has become with scams, well, imagine those scams but now they’re created by something smarter than you. They look and sound authentic, they know how persuasion works better than you do, and now there are masses of people sending money and voting based on something that isn’t even real. I mean, that’s today, right, so add Terminators and multiply.

We’ve connected the world and opened windows to its every corner and you know what, people are still people, jammed full of flaws, believing anything that tickles the cortex. We have good people at the top, but also people who don’t give a damn about anyone outside their own inner circle, who have been richly rewarded for this personality trait, and now they can afford Terminators. You can see how AI will destroy us because it’s already happening; it’s this, amplified, so that the next time someone wants to entrench some poverty, or kick a trillion-dollar bill to the next generation, a Terminator helps them do it.

With money we will get Terminators, Caesar said, and with Terminators we will get money; that’s how it happens. I’m not afraid of AI; AI will allow us to unlock wonders. But I’m afraid of your AI.
